Seedance video orchestration — multi-agent drama to MP4

MultiModel Hackathon Audience Favorite: a four-step Writer / Makeup / Director / Seedance API built on Butterbase, ModelArk, and ffmpeg that turns a personal drama into a demo-ready video.


Opening note

This project won Audience Favorite ($1,000 on site) at the MultiModel Hackathon with Beta Fund—a Beta Hacks–style build day with tracks around AI video agents, content automation, and dev infra. Our angle was simple to pitch but heavy to ship: turn a short personal drama into a playable Seedance video by automating storyboard → character/prompt prep → segmented generation → ffmpeg merge → (optional) upload.

Open source repo: Harvey-Yuan/seedance_video_demo.

Onsite

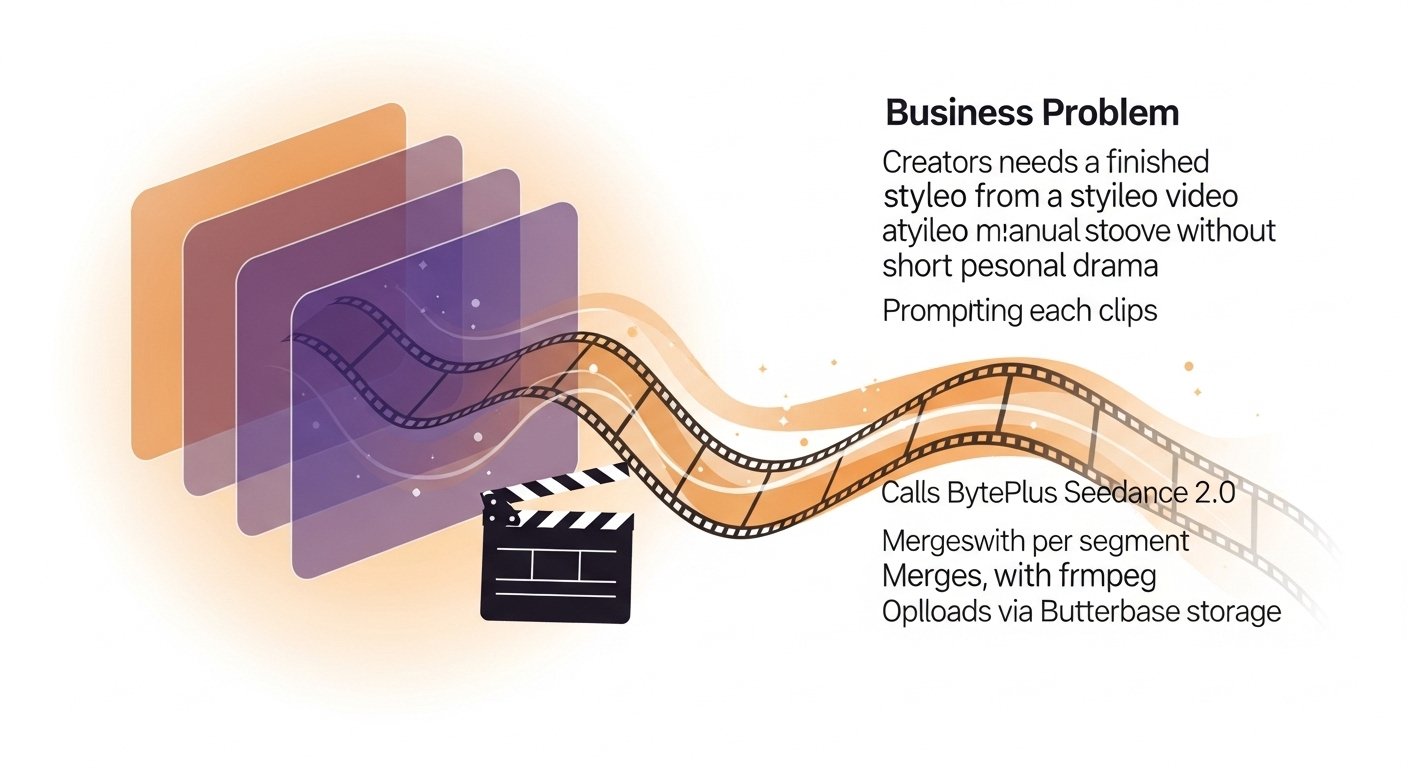

Problem framing

Short narrative videos usually stall on three chores: writing a usable storyboard and dialogue lines, crafting model prompts for each clip, and stitching the assets into one MP4 with a shareable URL. Seedance is strong at clip generation; orchestration and glue were the hackathon bottleneck.

We split the work into Writer / Makeup / Director / Seedance HTTP steps behind FastAPI, plus an optional one-shot pipeline run in the background. LLM calls and storage lean on Butterbase; video and stills use BytePlus ModelArk (Seedance 2.0 and Seedream); merging uses local ffmpeg; long outputs can target Butterbase Storage (watch the per-file limits; see RUN.txt).
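The local ffmpeg merge can be sketched roughly as follows. This is a minimal illustration that assumes ffmpeg's concat demuxer with stream copy, which only works when every segment shares the same codec, resolution, and frame rate; the repo's actual merge code may differ. The function names and the segments.txt file name are hypothetical.

```python
import subprocess
from pathlib import Path


def write_concat_list(segments: list[Path], list_path: Path) -> Path:
    """Write a concat-demuxer list file: one `file '...'` line per segment."""
    lines = []
    for seg in segments:
        # Inside single quotes, a literal quote is written as '\'' for ffmpeg.
        escaped = str(seg).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    list_path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return list_path


def build_merge_command(list_path: Path, output: Path) -> list[str]:
    """Build the ffmpeg argv for a stream-copy concat (no re-encode)."""
    return [
        "ffmpeg", "-y",            # overwrite the output if it already exists
        "-f", "concat",            # use the concat demuxer
        "-safe", "0",              # allow absolute paths in the list file
        "-i", str(list_path),
        "-c", "copy",              # copy streams instead of re-encoding
        str(output),
    ]


def merge_segments(segments: list[Path], workdir: Path, output: Path) -> Path:
    """Hypothetical helper: write the list file, then run ffmpeg."""
    list_path = write_concat_list(segments, workdir / "segments.txt")
    subprocess.run(build_merge_command(list_path, output), check=True)
    return output
```

If segments come back with mismatched encoding parameters, stream copy is not enough and the merge would need a re-encode instead of `-c copy`.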

What the console looks like

Two real screenshots from the Vite UI (npm run dev with the API on uvicorn): storyboard/script focus on the left, run-stage telemetry on the right.

A sample render from the same toolchain

Below is the demo clip re-encoded to 720p H.264 for smooth in-browser playback (source exports can be larger).

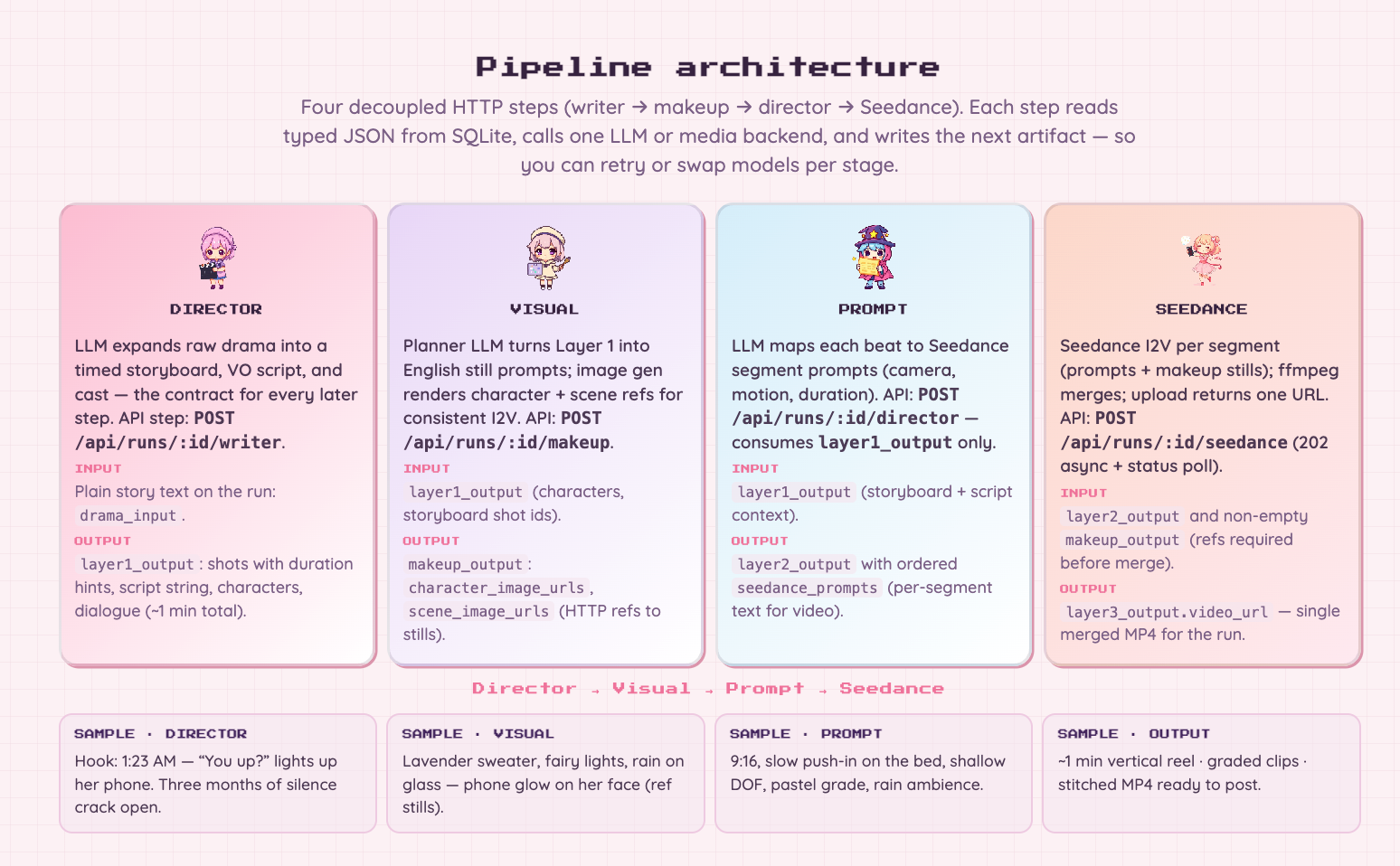
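For readers who want to reproduce a similar browser-friendly encode, here is a hedged sketch of an ffmpeg command builder. The exact settings used for the demo clip are not recorded in this post, so the CRF value, preset, and audio codec below are assumptions, and the function name is hypothetical.

```python
from pathlib import Path


def build_720p_h264_command(source: Path, output: Path, crf: int = 23) -> list[str]:
    """Build an ffmpeg argv that re-encodes a video to 720p H.264."""
    return [
        "ffmpeg", "-y",
        "-i", str(source),
        "-vf", "scale=-2:720",      # 720 px tall; width keeps aspect ratio, rounded to even
        "-c:v", "libx264",          # H.264 video encoder
        "-preset", "medium",        # assumed speed/size trade-off
        "-crf", str(crf),           # assumed quality target (lower means higher quality)
        "-pix_fmt", "yuv420p",      # pixel format most browsers can decode
        "-movflags", "+faststart",  # put the index first so playback can start early
        "-c:a", "aac",              # assumed audio codec
        str(output),
    ]


if __name__ == "__main__":
    # Print the command rather than running it, so the sketch has no side effects.
    print(" ".join(build_720p_h264_command(Path("source.mp4"), Path("demo_720p.mp4"))))
```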

Architecture cheat sheet (from API.md)

| Step | Route (excerpt) | Role |
|---|---|---|
| Create run | POST /api/runs | Persist drama_input draft |
| Writer | POST /api/runs/{id}/writer | Layer1 storyboard / script / lines |
| Makeup | POST /api/runs/{id}/makeup | Character reference image URLs from layer1 |
| Director | POST /api/runs/{id}/director | Per-segment Seedance prompts from layer1 |
| Merge | POST /api/runs/{id}/seedance (202) | Async segments + ffmpeg merge + upload |

The recommended call order is explicit in-repo: writer → makeup → director → seedance, so stills exist before clip prompts—aligned with the pixel-love-studio direction. Clients poll GET /api/runs/{id} plus .../seedance/status.
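A client following that order might look like the sketch below. The routes and the step order come from the table above; everything else is an assumption made for illustration: the request body shape, the `id` field in the create response, the `status` field and its terminal values, and the injected `request` callable (which stands in for whatever HTTP library the client uses). Only GET /api/runs/{id} is polled here.

```python
import time
from typing import Callable, Optional

# Order recommended in the repo: stills (makeup) exist before clip prompts (director).
STEP_ORDER = ["writer", "makeup", "director", "seedance"]

# Hypothetical terminal values; the real status vocabulary lives in the repo's API docs.
TERMINAL_STATES = {"completed", "failed"}

Request = Callable[[str, str, Optional[dict]], dict]


def step_routes(run_id: str) -> list[tuple[str, str]]:
    """Return the (method, path) pairs for the four steps, in call order."""
    return [("POST", f"/api/runs/{run_id}/{step}") for step in STEP_ORDER]


def run_pipeline(
    request: Request,
    drama_input: str,
    poll_interval: float = 2.0,
    max_polls: int = 150,
    sleep: Callable[[float], None] = time.sleep,
) -> dict:
    """Create a run, call each step in order, then poll the run until it finishes."""
    # Assumed body shape and response field; adjust to the real contract.
    run = request("POST", "/api/runs", {"drama_input": drama_input})
    run_id = run["id"]

    for method, path in step_routes(run_id):
        request(method, path, None)  # the seedance step returns 202 and works asynchronously

    for _ in range(max_polls):
        state = request("GET", f"/api/runs/{run_id}", None)
        if state.get("status") in TERMINAL_STATES:
            return state
        sleep(poll_interval)
    raise TimeoutError(f"run {run_id} did not finish after {max_polls} polls")
```

Injecting `request` and `sleep` keeps the sketch independent of any HTTP library and makes the ordering easy to check with a fake transport.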

Closing

This is not a single-button model demo; it is a multi-agent contract pipeline that keeps Seedance focused on clip generation while LLMs and engineering handle everything around it. If you extend the flow (human review, alternate video providers), update both contracts/*.schema.json and backend/pipeline_agents.py so the UI and JSON stay in lockstep.
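One lightweight way to notice when an agent's output drifts from its contract is to compare the payload against the schema's `required` list. The sketch below uses only the standard library and a made-up schema; the field names are hypothetical and are not copied from the repo's contracts/*.schema.json files, and a full JSON Schema validator would check far more than top-level keys.

```python
import json

# Hypothetical contract for illustration only; not the repo's real schema.
EXAMPLE_SCHEMA = json.loads("""
{
  "type": "object",
  "required": ["storyboard", "script", "lines"],
  "properties": {
    "storyboard": {"type": "array"},
    "script": {"type": "string"},
    "lines": {"type": "array"}
  }
}
""")


def missing_required(schema: dict, payload: dict) -> list[str]:
    """Return the top-level keys the schema requires but the payload lacks."""
    return [key for key in schema.get("required", []) if key not in payload]


if __name__ == "__main__":
    draft = {"storyboard": [], "script": "INT. KITCHEN - NIGHT"}
    print(missing_required(EXAMPLE_SCHEMA, draft))
```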

Technology stack

backend:

- FastAPI

- Python 3.11+

- SQLite / Butterbase schema

- ffmpeg

- BytePlus ModelArk (Seedance 2.0, Seedream)

frontend:

- React 18

- TypeScript

- Vite 5

integration:

- Butterbase (LLM + Storage + MCP)